Online dating has always relied on something fragile yet essential: a sense of authenticity. When a new match appears, users assume the person behind the screen is real, reachable, and genuinely represented by the photos and stories they’ve shared. Over the past year, however, dating platforms have felt a growing tension as AI-generated faces, scripted bios, and fully fabricated personas slip past moderation. The shift hasn’t been subtle. Product and operations teams are now facing a challenge that’s both technical and human: how do you maintain an environment where people feel safe connecting when synthetic identities can look as convincing as the real thing?

Researchers have been warning about this trend. A study from the British Psychological Society explored the risks AI poses in the dating world and highlighted how easily someone can build a profile that feels authentic to unsuspecting users. The message is clear: synthetic identities aren’t just a fringe phenomenon. They’re beginning to shape online interactions, and platforms can’t afford to wait for the situation to escalate before strengthening their defenses.

Fortunately, dating apps already have the building blocks for better protection. What they need now is a strategy that blends product design, technical safeguards, and thoughtful policy. And it begins with understanding how AI-made profiles operate.

AI Fake Profile Detection and Why It Matters for Dating Platforms

When teams talk about AI fake profile detection, they often focus solely on the photo. But synthetic profiles are rarely the result of a single trick. The images may be produced by diffusion models, the bio might be stitched together from large language model outputs, and the persona may be automated to message people in a way that mimics natural conversation.

This kind of fabrication doesn’t just waste users’ time. It weakens their confidence in the platform. A person who realizes they’ve been interacting with a manufactured identity – one created entirely by algorithms – won’t just close the app; they often share their frustration with friends, which reflects poorly on the product itself.

For teams serious about user retention, identifying synthetic profiles early becomes part of essential product maintenance, much like addressing security vulnerabilities or preventing payment fraud. But detection can’t depend solely on human moderators. The volume is too high, the images are too refined, and the patterns are too subtle. Automated systems need to support moderators rather than leave them as the primary line of defense.

Why Manual Moderation Alone Falls Short

Smaller dating platforms often assume their moderators can catch most fake profiles. That was somewhat possible in the early days of AI-generated imagery. But as generative models improved, fake faces began matching real-life features with uncanny precision. Skin textures look natural, lighting appears consistent, and facial proportions fall within human norms. The old cues, like oddly shaped eyes or unbalanced reflections, are no longer reliable.

Meanwhile, AI-generated bios complicate things even further. They’re usually written in warm, relatable tones, and they highlight interests that feel balanced and believable. Paired with a polished face, the result is a profile that easily passes a casual inspection.

Expecting moderators to catch all of this is unrealistic and unfair to the people doing the job. Fatigue sets in quickly, and mistakes follow. What dating apps need instead is a system where the first layer of verification happens automatically, allowing humans to focus on cases where judgment and context matter.

How Image Verification APIs Strengthen Dating App Moderation

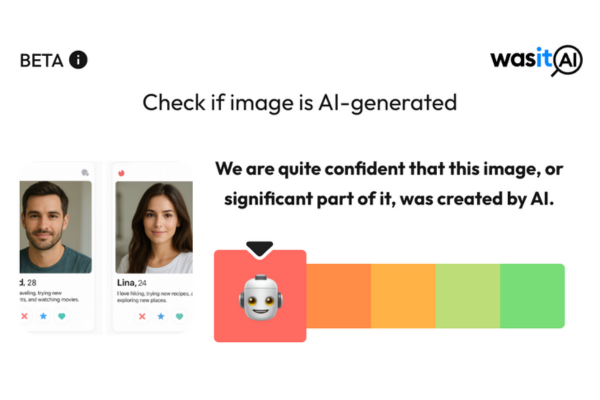

Integrating an image verification API into the onboarding workflow is one of the strongest moves a platform can make. These APIs analyze uploaded photos and estimate whether each one is likely AI-generated or a genuine photograph. The process happens quietly in the background, adding almost no friction for new users.

For apps with selfie verification or ID checks, the integration is straightforward. For platforms with more casual onboarding, the API can run selectively – triggered only when certain behavioral or technical signals suggest an elevated risk.
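
To make the idea of selective checks concrete, here is a minimal sketch of a risk gate that decides whether a new signup's photos should be sent for verification. The signal names, weights, and threshold are illustrative assumptions, not values taken from any particular platform or API; a real system would tune them against its own data.

```python
from dataclasses import dataclass


@dataclass
class SignupSignals:
    """Hypothetical onboarding signals; the field names are assumptions."""
    account_age_minutes: float
    photos_uploaded: int
    used_disposable_email: bool
    signups_from_same_device: int


def should_verify_images(signals: SignupSignals, threshold: int = 2) -> bool:
    """Return True when the combined risk score reaches the threshold."""
    score = 0
    if signals.used_disposable_email:
        score += 2
    if signals.signups_from_same_device > 1:
        score += 2
    # A brand-new account that uploads many photos at once is mildly suspicious.
    if signals.account_age_minutes < 10 and signals.photos_uploaded >= 4:
        score += 1
    return score >= threshold
```

With these assumed weights, a fast bulk upload on its own does not trigger a check, while a disposable email address or a reused device does.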

This is where solutions like WasItAI come into play. Our service is designed to give dating platforms reliable signals when dealing with potentially synthetic imagery. While no single tool replaces broader moderation strategies, incorporating accurate image verification creates a stronger baseline for safety. And because the responsibility ultimately lies with the platform itself, tools like ours function as a support system: an essential component, but not the full answer.

How Smarter Policies Help Platforms Stay Ahead

Technical tools are only as effective as the policies built around them. Dating platforms make the biggest progress when they combine visual signals, text analysis, and behavioral monitoring into one consistent workflow.

For example, when a user uploads several new photos, each image should be checked for consistency. Does the face align with the verification selfie? Are there signs of stylization typical of modern generative models? If the verification API flags an image as high-risk, the system should route that profile for deeper review rather than letting it pass unexamined.
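
The routing rule described above can be sketched in a few lines. This example assumes the verification step has already produced, for each photo, an AI-likelihood score between 0 and 1 and a flag saying whether the face matches the verification selfie. Both fields, and the 0.8 cutoff, are hypothetical and do not reflect the response format of any specific API.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    APPROVE = "approve"
    HUMAN_REVIEW = "human_review"


@dataclass
class PhotoCheck:
    """Result of checking one photo; both fields are assumed for illustration."""
    photo_id: str
    ai_likelihood: float   # 0.0 (likely genuine) to 1.0 (likely AI-generated)
    matches_selfie: bool   # does the face align with the verification selfie?


def route_profile(checks: list[PhotoCheck], high_risk: float = 0.8) -> Route:
    """Send the profile to human review if any photo looks risky."""
    for check in checks:
        if check.ai_likelihood >= high_risk or not check.matches_selfie:
            return Route.HUMAN_REVIEW
    return Route.APPROVE
```

A single risky photo is enough to route the whole profile to review here. That is a deliberately cautious choice, and a platform could just as reasonably require several weak signals before escalating.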

The tone of these policies matters. Platforms should frame verification as a way to keep the space safe and enjoyable. If a user’s photo doesn’t pass the check, asking them to retake it with clearer lighting feels constructive rather than punitive. On the other hand, repeated uploads of questionable images may call for restricting account activity until the situation is resolved.
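
One way to encode this escalation is a small policy object that asks for a retake on the first failed checks and restricts the account only after repeated failures. The class name, the action strings, and the default limit of three are assumptions made for this sketch.

```python
from collections import defaultdict


class UploadPolicy:
    """Tracks failed photo checks per user and picks a proportionate response."""

    def __init__(self, max_flagged_uploads: int = 3):
        self.max_flagged_uploads = max_flagged_uploads
        self._flagged_counts = defaultdict(int)

    def handle_upload(self, user_id: str, passed_check: bool) -> str:
        if passed_check:
            return "accept"
        self._flagged_counts[user_id] += 1
        if self._flagged_counts[user_id] >= self.max_flagged_uploads:
            # Repeated questionable uploads: pause activity until resolved.
            return "restrict_account"
        # Early failures get a constructive prompt, not a penalty.
        return "ask_retake"
```

In this sketch a successful upload does not reset the counter, so an account cannot alternate good and questionable photos indefinitely. Whether that is the right trade-off depends on how often genuine users fail the check.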

Text analysis can support this too. Many AI-generated bios share patterns – certain sentence structures, generic positivity, or overly broad hobbies. Even without banning AI-written bios outright, platforms can use these indicators to inform risk scoring. When combined with image verification, the signals create a clearer picture of whether a profile is genuinely human.
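
As a rough illustration of combining the two signals, the sketch below scores a bio by counting generic phrases and blends that with an image score. The phrase list, the 0.7 image weight, and the assumption that the image check returns a likelihood between 0 and 1 are all placeholders. A production system would more likely use a trained text classifier than a keyword list.

```python
# Hypothetical examples of generic bio phrasing; not an authoritative list.
GENERIC_PHRASES = (
    "love to travel",
    "good food",
    "new adventures",
    "partner in crime",
    "live life to the fullest",
)


def bio_risk(bio: str) -> float:
    """Return a 0..1 score that rises with the number of generic phrases."""
    text = bio.lower()
    hits = sum(1 for phrase in GENERIC_PHRASES if phrase in text)
    return min(hits / 3, 1.0)  # three or more hits saturates the score


def profile_risk(bio: str, image_ai_likelihood: float,
                 image_weight: float = 0.7) -> float:
    """Weighted blend of the image signal and the bio signal."""
    return (image_weight * image_ai_likelihood
            + (1 - image_weight) * bio_risk(bio))
```

Because plenty of real people also write generic bios, the text score is weighted well below the image score here and is meant to inform risk scoring, not to decide anything on its own.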

The goal isn’t to complicate onboarding. It’s to give real users the sense that their interactions on the platform come from actual people, not manufactured personas.

A Real-World Example of How AI-Made Profiles Spread

A product lead at a midsize dating app recently described a troubling pattern. A group of new profiles appeared within just a few hours, each one seemingly normal. The faces were diverse and realistic. The bios were short but pleasant. Yet something felt off. After users began reporting odd interactions, the team noticed the conversations from these profiles were almost identical: friendly greetings, similar pacing, comparable phrasing.

Only then did they realize the faces were AI-generated and the messaging automated.

The team reworked its onboarding pipeline. Every photo began passing through an automated verification layer, with suspicious images flagged for review. As they tuned the process, reports dropped. And more importantly, user interactions started feeling more natural again.
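
The near-identical conversations in this story point to a complementary behavioral signal. The sketch below is not the team's actual method, which the account does not describe. It is one simple way to surface pairs of profiles whose opening messages are suspiciously alike, using Python's standard `difflib` module. The function name, input shape, and 0.85 threshold are assumptions.

```python
from difflib import SequenceMatcher
from itertools import combinations


def similar_openers(openers: dict[str, str],
                    threshold: float = 0.85) -> list[tuple[str, str]]:
    """Return pairs of profile IDs whose first messages are near-duplicates.

    `openers` maps a profile ID to that profile's opening message.
    """
    pairs = []
    for (id_a, msg_a), (id_b, msg_b) in combinations(openers.items(), 2):
        ratio = SequenceMatcher(None, msg_a.lower(), msg_b.lower()).ratio()
        if ratio >= threshold:
            pairs.append((id_a, id_b))
    return pairs
```

Comparing every pair is quadratic in the number of profiles, so this approach only suits small batches such as accounts created within the same few hours. Larger volumes would call for hashing or clustering techniques instead.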

Stories like this are becoming increasingly common. The platforms that move quickly to modernize their detection systems are the ones preserving the quality of their communities.

Building a Dating Environment Where Real People Can Connect Confidently

Real connections require a space where users feel secure. A dating app can ship new features every week, but if the environment feels artificial or manipulated, people will simply stop engaging. Detecting and preventing AI-made profiles isn’t just about blocking bots – it’s about keeping human interactions meaningful.

Image verification APIs help teams keep pace with advances in synthetic imagery. And services like WasItAI support platforms by providing reliable signals that integrate naturally into onboarding or moderation systems. But the broader responsibility lies with dating apps themselves. They set the standards, define the policies, and decide how seriously they take user safety.

The landscape is evolving quickly, but solutions exist. With clear policies, thoughtful moderation workflows, and updated detection methods, platforms can stay ahead of the problem and create an environment where real people can meet without worrying whether the face behind the screen is fabricated.